AI Literacy: What Companies Need to Know Now

Artificial intelligence is no longer a topic of the future: it is already present in companies, public authorities and educational institutions. But those who use AI tools without understanding the underlying mechanisms risk wrong decisions, legal liability and strategic blind spots. In this insight, you will learn what AI literacy means, why it has become a critical success factor, what practice-oriented frameworks look like and what concrete steps companies need to take today, in areas ranging from regulation to corporate culture.

Key Takeaways

- AI literacy refers to the ability to understand AI systems, critically evaluate them, use them responsibly, and actively incorporate them into co-creation processes, far beyond simply operating no-code tools.

- Article 4 of the EU AI Act obliges providers and deployers of AI systems to ensure a sufficient level of AI literacy among their employees, which makes verifiable AI training a practical necessity.

- Digital literacy and data literacy are no longer enough: controlling probabilistic large language models (LLMs) requires a fundamentally new field of competence.

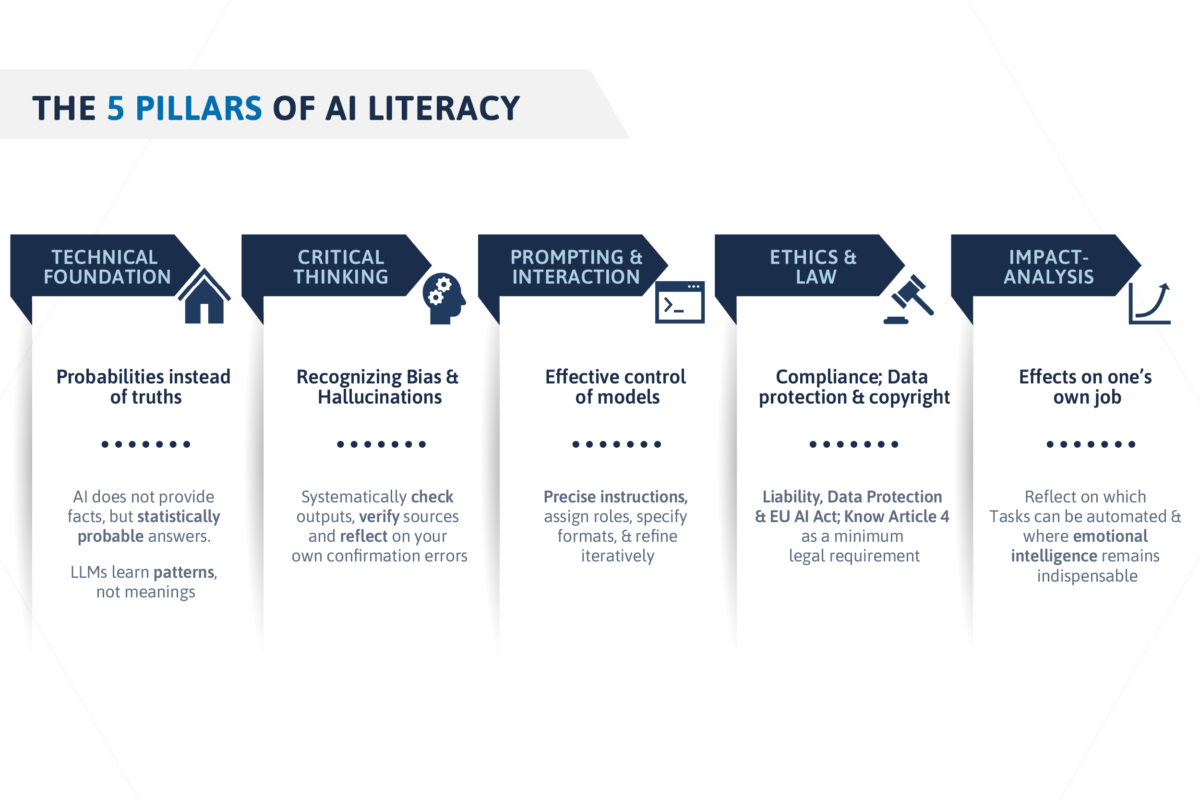

- AI literacy comprises five pillars: (1) technical foundation, (2) critical thinking, (3) prompting & interaction, (4) ethics & law, and (5) impact analysis.

- Organizations that systematically build AI literacy benefit from better human-AI collaboration, lower risks, and sustainable upskilling of their workforce.

What is AI Literacy: A Definition

AI literacy (or AI competence) refers to the bundle of knowledge, skills and attitudes that enable people to understand and critically reflect on artificial intelligence. The term goes beyond technical know-how: it is not about programming algorithms, but about understanding how AI systems work, what their limits are, and how they influence social, economic, and individual processes.

AI literacy is therefore an educational topic of the present. Anyone who wants to remain capable of acting in a working and living environment permeated by AI needs this field of competence. This applies to managers as well as to clerks, to developers as well as to teachers or the medical profession.

AI literacy is thus not only a technical but also a cultural, ethical and strategic field of competence. In AI research, it is increasingly discussed as a key competence of the 21st century, and regulators such as the EU define it as a minimum legal requirement.

Differentiation: Why Digital & Data Literacy Are No Longer Enough

For a long time, those who could operate digital tools and read and interpret data were well positioned.

Digital skills (e.g. software operation, online tools) and data literacy (e.g. reading tables, interpreting diagrams, recognizing relationships) form important foundations – but they are not sufficient to handle modern AI systems safely.

The reason lies in the way AI works, especially generative AI and large language models (LLMs). Conventional programs follow fixed rules: input A always leads to output B. AI systems work probabilistically: they calculate the most likely answer based on patterns learned from training data. As a result, the same input can produce different outputs, models can produce plausible but factually incorrect content (“hallucinations”), and they can reproduce bias from their training data.

The core difference:

- Data literacy means understanding past facts.

- AI literacy means dealing with uncertainty, estimating probabilities, knowing model boundaries, and understanding the specifics of probabilistic systems.

This makes AI literacy an independent field of competence that goes beyond classic digital and data-related skills and is specifically geared to the new requirements in dealing with AI.
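The contrast described above between rule-based and probabilistic behavior can be made tangible with a small sketch. It is a toy illustration, not how a real LLM is implemented: the word list, the probabilities and the function names `rule_based`, `sample_answer` and `answer_counts` are all assumptions chosen for demonstration.

```python
import random
from collections import Counter

# Toy next-word distribution for one fixed prompt. The words and
# probabilities are invented for illustration, not taken from a real model.
NEXT_WORD_PROBS = {"Paris": 0.80, "Lyon": 0.15, "Berlin": 0.05}


def rule_based(prompt: str) -> str:
    """Conventional program: the same input always gives the same output."""
    return "Paris"


def sample_answer(rng: random.Random) -> str:
    """Probabilistic system: draw one answer according to its probability."""
    words = list(NEXT_WORD_PROBS)
    weights = list(NEXT_WORD_PROBS.values())
    return rng.choices(words, weights=weights, k=1)[0]


def answer_counts(n: int, seed: int = 0) -> Counter:
    """Ask the toy 'model' n times and count how often each answer appears."""
    rng = random.Random(seed)
    return Counter(sample_answer(rng) for _ in range(n))


if __name__ == "__main__":
    print(rule_based("What is the capital of France?"))
    print(answer_counts(1000))
```

Running `answer_counts` shows that most draws return the most likely word, yet a minority return something else. This is why a single AI output should be read as a probable answer rather than as an established fact.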

The 5 pillars of AI competence

AI literacy can be divided into five central areas of competence, which together provide a complete picture of the necessary AI competence:

1. Technical foundation: understanding probabilities instead of truths

Anyone who is AI literate understands the basic principle behind AI systems: they do not provide facts, but statistically probable answers. Large language models (LLMs) such as GPT or Claude have been trained on huge amounts of text and learn patterns, not meanings. As a result, their answers are sometimes spectacularly wrong without any sign of it in the text. This knowledge is the basis for all other skills: only those who understand that AI does not provide truths can systematically question outputs.

2. Critical Thinking: Recognizing Bias and Hallucinations

AI systems are not neutral. They reproduce the distortions and imbalances of their training data, which is referred to as bias in AI. At the same time, large language models tend to hallucinate: they invent references, statistics or events that never took place, but present them very persuasively. Critical thinking in the AI context means not accepting outputs at face value, but systematically checking them, verifying sources and reflecting on one’s own confirmation bias. This skill is at the core of any true AI competence.

3. Prompting & Interaction: Effective Control of Models

Prompt engineering skills are the practical side of AI literacy: the ability to steer AI systems through precise, structured instructions so that they produce useful outputs. Good prompting means providing context, assigning roles, specifying output formats, and iteratively refining the prompt. This ability distinguishes people who use AI as a sparring partner from those who treat it as an oracle, with correspondingly different results.
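The prompt elements named above (role, context, output format, iterative refinement) can be sketched as a small data structure. This is only one possible way to organize a prompt, not a prescribed method: the class `PromptSpec`, its field names and the plain-text layout are hypothetical, and no specific AI tool or API is assumed.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt elements described in the text."""
    role: str
    context: str
    task: str
    output_format: str
    refinements: list[str] = field(default_factory=list)

    def refine(self, note: str) -> PromptSpec:
        """Return a new spec with one more refinement (iterative improvement)."""
        return PromptSpec(self.role, self.context, self.task,
                          self.output_format, [*self.refinements, note])

    def render(self) -> str:
        """Assemble all elements into one plain-text prompt."""
        parts = [
            f"Role: {self.role}",
            f"Context: {self.context}",
            f"Task: {self.task}",
            f"Output format: {self.output_format}",
        ]
        parts += [f"Refinement {i}: {note}"
                  for i, note in enumerate(self.refinements, start=1)]
        return "\n".join(parts)


if __name__ == "__main__":
    first = PromptSpec(
        role="You are an HR policy analyst.",
        context="Our company is drafting rules for internal AI use.",
        task="List the main data protection risks.",
        output_format="A bulleted list with at most five items.",
    )
    # Second iteration: tighten the request after reviewing a first answer.
    print(first.refine("Name the source of each claim.").render())
```

Keeping the elements separate makes it easier to see which part of a prompt was changed between iterations and why the output improved or got worse.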

4. Ethics & Law: Compliance, Data Protection and Copyright

AI ethics in the company is not only a moral question, but also a legal one. What data may be entered into AI systems? Who is liable for AI-supported decisions? Does the use of generative AI infringe copyrights? The EU AI Act, in particular Article 4, sets specific requirements for the AI competence of companies. Those who ignore this dimension risk compliance violations, data breaches and liability claims, especially in critical infrastructures.

5. Impact analysis: Assessment of the impact on one’s own job

AI literacy includes the ability to reflect on how AI is changing one’s work, which tasks can be automated, where human emotional intelligence and judgment remain indispensable, and how human-AI collaboration is changing one’s area of responsibility. This strategic self-reflection is crucial for individual resilience and organizational transformation.

AI Literacy Frameworks: Structure for Skill Management

For companies that want to systematically build up AI competence, established frameworks offer valuable guidance. They create a common language, help with taking stock and provide a roadmap for structured upskilling.

OECD AI Literacy Framework

The OECD AI Literacy Framework is one of the most cited international reference models for the development of AI competence. Developed as part of the OECD’s work on digital skills, it highlights four key areas:

- Understanding AI concepts and modes of operation,

- the critical examination of AI outputs,

- the ethical dimension of the use of AI, and

- active participation in the design of AI-shaped environments.

The framework is characterized by its international connectivity: It is compatible with the European Digital Competence Framework (DigComp) of the European Commission and has been integrated into national education standards of several OECD countries.

For companies, it offers a solid basis for developing their own competence profiles and making AI skills measurable. AI competence can thus not only be defined, but also promoted and tested in a structured way.

LEAD AI Literacy Model (Learn, Engage, Acknowledge, Develop)

The LEAD model is a practice-oriented framework that describes four consecutive levels of competence and is particularly suitable for corporate use:

- Learn: The first level is about understanding the basics and how AI works. What are Large Language Models? How does machine learning work? What does bias mean in AI? This basic knowledge is a prerequisite for all further competence levels and the starting point for any meaningful AI training.

- Engage: The second level covers active practical use in one’s own department. Employees learn how to use AI tools for specific tasks, develop prompt engineering skills, and optimize workflows with AI support. This shows whether theoretical know-how is effective in real work contexts.

- Acknowledge: The third level is critical reflection: What are the limits and risks of AI? Where is there a risk of hallucinations, bias or data protection violations? When can AI outputs be trusted, and when not? This reflective competence is crucial for the responsible use of AI systems in companies and organizations.

- Develop: The fourth level goes beyond individual use: it is about developing AI strategies in a team, designing co-creation processes with AI and acting as a multiplier for AI competence in the organization. At this level, true organizational AI sovereignty emerges.

The “Compliance Trap”: AI Literacy and the EU AI Act

Many companies consider AI literacy to be a “nice to have” – an educational offer for committed employees. With the EU AI Act, AI competence has become a legal obligation. Anyone who ignores this falls into a compliance trap that can have financial and legal consequences.

Article 4 of the EU AI Act: The Duty of AI Competence

Article 4 of the EU AI Act obliges providers and deployers of AI systems to take measures to ensure that their employees have sufficient AI competence. The thrust of the regulation is clear: companies must be able to show that people who use or supervise AI systems have the knowledge and skills needed to use these systems safely and responsibly.

The AI training obligation for employees does not only affect IT departments or AI developers. It applies to all employees who come into contact with AI systems in the course of their work, from clerks who use AI-supported decision support to managers who use AI-based analyses for strategic decisions. The EU AI Act Article 4 requirements thus make AI competence a company-wide educational topic.

Liability and Risk: Why “Not Knowing” Becomes Expensive

The liability risks in the absence of AI literacy are considerable. If AI systems without sufficient human oversight lead to wrong decisions, for example in lending, medical diagnostics or personnel decisions, and the company cannot prove that the responsible employees were sufficiently trained, there is a risk of severe fines and civil law claims.

The situation is particularly delicate with black-box models: AI systems whose decision-making processes are not transparently comprehensible impose massive explanation obligations on companies. Those who cannot prove that employees know and understand the limits of such systems risk compliance violations. This is especially true in critical infrastructures such as healthcare, the financial sector or public administration, where the EU AI Act imposes particularly strict requirements.

AI literacy is therefore not only a competitive advantage, but also a liability protection.

Why AI literacy is particularly relevant for companies

Digitization has forced companies to fundamentally rethink processes, structures and business models. AI is accelerating this change in ways that many organizations underestimate. The proliferation of generative AI, no-code tools, and automated decision-making systems is changing not only individual tasks, but the entire value creation logic of companies.

At the same time, the EU AI Act creates a binding regulatory framework that forces companies to systematically deal with AI competence. Those who do not act here risk three dimensions of damage: (1) operational mistakes due to the uncritical adoption of AI outputs, (2) legal liability due to violations of EU regulation, and (3) the misuse of AI tools by employees who are unable to assess risks.

Target group-specific competence profiles

AI competence is not a uniform requirement profile – it must be tailored to the respective role. Three target groups can be clearly defined in the corporate context:

1. Employees at all levels

Employees at all levels need practice-oriented AI skills above all: increased efficiency through prompting and workflow integration, a basic understanding of AI systems, critical evaluation of outputs and sensitivity to data protection requirements. The aim here is to integrate AI safely and productively into everyday work.

2. Managers and decision-makers

Managers and decision-makers also need strategic foresight and governance skills: How is AI changing our value creation? What investments in AI infrastructure and AI competence are necessary? How do we ensure that AI decisions are ethically sound and compliant with regulation? AI competence at this level means strategically managing the opportunities and risks of digitalization.

3. AI developers and IT and compliance teams

Finally, AI developers as well as IT and compliance teams are responsible for the security, architecture, and monitoring of AI systems. They need deep technical know-how, knowledge of AI research, an understanding of bias and fairness in algorithms, and expertise in the EU AI Act and related regulations. For them, AI literacy is the prerequisite for responsible engineering.

Risks & Challenges

Building AI literacy in organizations is associated with typical challenges that companies should be aware of:

- Misunderstandings & over-trust: Many employees overestimate the reliability of AI outputs. Hallucinations are accepted as facts, bias in AI remains undetected. This over-trust is dangerous and can only be reduced through targeted training.

- Lack of standards and measurement methods: There are still no binding, uniform standards on how AI literacy should be measured. Companies must develop their own competency frameworks and update them regularly.

- Legal & Ethical Risks: AI ethics in business is complex and often unclear. Who is allowed to enter which data into AI systems? How is copyrighted content treated? These issues require clear policies and trained personnel.

- Cultural hurdles: Many companies are skeptical or afraid of AI. AI competence also means fostering a culture of critical but constructive use of new technologies. This takes time and consistent change management.

People with AI literacy: How do you recognize them?

AI literacy is not a certifiable trait that you acquire once and then own. It manifests itself in concrete behaviors and thought patterns. People with real AI competence can be recognized by the following characteristics:

- They understand that AI does not provide truths, but probabilities, and therefore systematically question outputs, for example through chain-of-thought prompting or targeted cross-checks.

- They can assess where AI can be used sensibly and where not, and use AI as a sparring partner, not as an oracle. They know that emotional intelligence, ethical judgment, and contextual understanding continue to be human domains.

- They know how a large language model works, what it can do and what is structurally beyond its capabilities, for example real understanding, causality or reliable factual accuracy.

- They reflect on their own role in interaction with AI and continuously ask themselves how human-AI collaboration influences their decisions, creativity and responsibilities.

- They question the influence AI has on language, thinking, decision-making processes and interpersonal relationships and take this social dimension seriously.

- They know the environmental and social costs of the technology: the energy consumption of AI data centers, the global supply chains for hardware, the working conditions in data annotation. AI literacy includes digital responsibility.

EFS Consulting AI Program Lead Ralph Zlabinger on AI Literacy: Responsibility and Judgement Instead of Mere Operation

What always surprises me in conversations on the topic is that AI is often either overestimated or underestimated – rarely realistically assessed. Both are problematic.

For me, AI literacy means one thing above all: that people are able to use these systems sensibly and responsibly.

Anyone who only “operates” AI without understanding it is relinquishing decision-making authority. Those who use it sensibly gain decision-making space. That’s why AI literacy is less a technology topic than a question of responsibility, judgement and organizational maturity.

EFS Consulting Experts on Building & Measurement: The Roadmap to AI Sovereignty

How can companies systematically build up AI literacy and anchor it permanently? EFS Consulting recommends a three-step approach that combines technical expertise, cultural change, and measurable results.

The 3-Step Plan (Baseline → Enablement → Culture)

Stage 1 – Baseline:

It all starts with an honest inventory. AI self-assessments, competence tests and structured interviews are used to determine what knowledge about AI systems, prompt engineering skills and AI ethics is already available in the company and where critical gaps exist. The result is a company-specific competence profile that serves as the basis for all further measures.

Stage 2 – Enablement:

Based on the inventory, target group-specific AI training courses are developed and carried out. For employees, the focus is on prompting, workflow integration and critical evaluation of outputs. For executives, the focus is on strategic governance and EU AI Act compliance. For IT and compliance teams, it is all about deep technical know-how, security architecture, and monitoring of AI systems. No-code tools and hands-on exercises ensure that what is learned transfers quickly into everyday work.

Stage 3 – Culture:

Sustainable development of AI competence requires more than training. A corporate culture is needed in which critical thinking, continuous upskilling and open discussion of AI risks are a matter of course. EFS Consulting, together with Framechangers™, accompanies this cultural change through formats such as AI Communities of Practice, regular reflection sessions and the development of internal AI ambassadors.

Measurability of AI Literacy

What is not measured is not improved. EFS Consulting relies on a multidimensional measurement system for AI competence that includes the following elements:

- standardized competency tests before and after training measures to measure learning progress,

- a role-specific skills matrix that makes the development status of each employee visible at a glance,

- KPI-based tracking of AI literacy initiatives at the team level, and

- regular AI self-assessments that promote self-reflection and self-directed learning.

Through this data-driven approach, AI literacy becomes a measurable, controllable corporate asset and no longer a diffuse educational topic.
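How pre/post tests, a role-specific skills matrix and team-level KPIs fit together can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the measurement system used by EFS Consulting: the score scale (0 to 100), the sample data, the target levels and the function names `skills_matrix` and `team_kpis` are all hypothetical.

```python
from statistics import mean

# Hypothetical pre/post competency test scores (0-100) per employee.
# Names, roles and numbers are invented for illustration.
SCORES = [
    {"name": "A", "role": "employee", "pre": 40, "post": 70},
    {"name": "B", "role": "employee", "pre": 55, "post": 65},
    {"name": "C", "role": "manager", "pre": 60, "post": 80},
]

# Hypothetical target score per role.
TARGETS = {"employee": 70, "manager": 75}


def skills_matrix(scores, targets):
    """One row per person: learning gain and whether the role target is met."""
    rows = []
    for s in scores:
        rows.append({
            "name": s["name"],
            "role": s["role"],
            "gain": s["post"] - s["pre"],
            "meets_target": s["post"] >= targets[s["role"]],
        })
    return rows


def team_kpis(rows):
    """Team-level KPIs: average gain and share of people meeting their target."""
    return {
        "avg_gain": mean(r["gain"] for r in rows),
        "share_meeting_target": sum(r["meets_target"] for r in rows) / len(rows),
    }


if __name__ == "__main__":
    matrix = skills_matrix(SCORES, TARGETS)
    for row in matrix:
        print(row)
    print(team_kpis(matrix))
```

In practice, the thresholds and the test instrument matter far more than the arithmetic; the sketch only shows that individual results and team KPIs can be derived from the same data.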

Conclusion

AI literacy and AI competence are not optional continuing education topics. They are the strategic answer to a profound transformation that encompasses all industries, functions and hierarchical levels. Anyone who understands how AI systems work, where their limits lie and what legal and ethical requirements apply can actually use artificial intelligence as a tool, not as a black box.

If you as a company want to know where your organization stands today and how you can build AI literacy in a targeted manner, we are the right partner for you. EFS Consulting accompanies companies from baseline analysis through the development of customized training programs to the cultural anchoring of AI competence, from initial orientation to sustainable AI sovereignty. Talk to us. We look forward to taking the next steps together with you.

FAQs

What is AI Literacy?

AI literacy, in German “KI-Kompetenz” (AI competence), refers to the ability to understand, critically evaluate and responsibly use artificial intelligence. It includes basic technical knowledge of AI systems and large language models, the ability to recognize bias and hallucinations, prompt engineering skills, and ethical and legal knowledge of AI.

Which skills are part of AI literacy?

AI skills in the context of AI literacy comprise five core areas: first, the technical foundation (understanding probabilistic systems), second, critical thinking (recognizing bias in AI and hallucinations), third, prompting & interaction (effective control of AI systems), fourth, ethics & law (compliance, data protection, EU AI Act), and fifth, impact analysis (assessing the impact on one’s own work). In addition, there are overarching competencies such as emotional intelligence in dealing with AI-altered work processes.

Why is AI literacy important?

AI literacy is important for three reasons: First, it protects against wrong decisions caused by the uncritical adoption of AI outputs, such as hallucinations and bias. Second, it has become a legal obligation for companies through the EU AI Act, in particular Article 4. Third, AI competence is the key to productive human-AI collaboration and thus to sustainable upskilling and competitiveness in a working world increasingly shaped by AI.

How can a company build AI literacy?

The best way to build AI literacy in the company is in three stages: first, a baseline analysis through AI self-assessments and competence tests to determine the current state of AI competence; then, target group-specific enablement through practical AI training for employees, managers and IT teams; and finally, cultural anchoring through communities of practice, internal AI ambassadors and continuous upskilling. EFS Consulting accompanies all three stages, from strategy to implementation.